Which Ethics? — Consequentialism

Consequentialism refers to the general class of normative systems that determine the rightness or wrongness of an act not by the rules or principles that motivate or control it (duties, obligations, or virtues), but by its effects, its consequences. There are several types of Consequentialism, depending upon exactly how the value of those effects is measured: Act Utilitarianism, Hedonism or Egoism, Altruism, and so on. There are several good sources on Consequentialism available on the net:

- The Stanford Encyclopedia of Philosophy's Consequentialism article

- The Internet Encyclopedia of Philosophy's Consequentialism article

- The BBC's Ethics Guide article on Consequentialism

- Wikipedia's Consequentialism article

Most writers dealing with Consequentialist machine ethics work with Jeremy Bentham's act utilitarianism, and specifically an altruistic version of it, given that it is the wellbeing of humans, not machines, that is of consequence. Machines are, of course, valuable, so damaging them wastes resources, but it is that waste, and not the consequences to the machines themselves, that factors in.

As noted in the previous installment, the work of Michael and Susan Leigh Anderson dealt in its earliest stages with Bentham's Utilitarianism, but soon shifted to Prima Facie Duty ethics, which is primarily a form of Deontology that bases some of its duties on Rule Utilitarianism or other Consequentialist theories.

Perhaps the most significant advocate for Consequentialism in machine ethics is Alan Winfield, a professor of Electronic Engineering who works in Cognitive Robotics at the Bristol Robotics Laboratory in the UK. The approach that Prof. Winfield and his colleagues have taken has been variously called the Consequence Engine and an Internal Model using a Consequence Evaluator. The most recent paper that I have describing this work is "Robots with internal models: A route to self-aware and hence safer robots". As can be seen from the following list of papers, their work has involved incorporating a number of biologically inspired approaches into the development and evolution of small swarm robots.

Formal publications — Robot ethics

- Towards an Ethical Robot: Internal Models, Consequences and Ethical Action Selection

- Robots with internal models: A route to self-aware and hence safer robots

Blog postings — Robot ethics

- How ethical is your ethical robot?

- Towards ethical robots: an update

- Towards an Ethical Robot

- On internal models, consequence engines and Popperian creatures

- Ethical Robots: some technical and ethical challenges

Formal publications — Related topics

- Evolvable robot hardware

- An artificial immune system for self-healing in swarm robotic systems

- Editorial: Special issue on ground robots operating in dynamic, unstructured and large-scale outdoor environments

- On the evolution of behaviours through embodied imitation

- Mobile GPGPU acceleration of embodied robot simulation

- Run-time detection of faults in autonomous mobile robots based on the comparison of simulated and real robot behavior

- A low-cost real-time tracking infrastructure for ground-based robot swarms

- The distributed co-evolution of an on-board simulator and controller for swarm robot behaviours

- Estimating the energy cost of (artificial) evolution

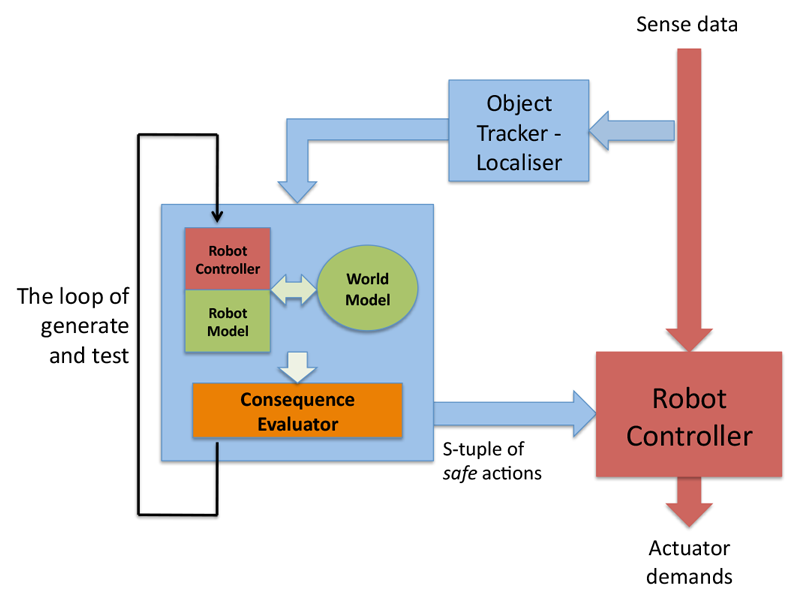

The Consequence Engine (CE), which is at the heart of their approach to machine ethics and safety, is a secondary control process that uses simulation to narrow the possible future actions available to the main control process. This diagram, taken from "Robots with internal models", illustrates the role of the CE.

The blue boxes and arrows to the left represent the overall CE processes; the red ones, to the right, represent the robot's main control processes. In this architecture, the robot has an internal model of both the external world and of itself and its own actions. The robot's main control system provides the CE (through a path not shown in the diagram) with a list of possible next actions. Each of these is simulated and its consequences are evaluated. In their design, the secondary system doesn't choose which next action is to be performed, but rather winnows the list of potential next actions to those which are "safe" or ethically acceptable. It returns this list to the main control program, which selects the actual action to perform.
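The filtering loop described above can be sketched in a few lines of Python. This is only an illustrative toy, not the Bristol team's implementation: the grid world, the names `WorldState`, `simulate`, `is_safe`, `consequence_engine` and `main_controller`, and the two-tick look-ahead are all assumptions made for the example. It loosely mirrors the hole experiment described below: a "human" walks east toward a hole, and the robot's candidate moves are kept only if the simulated outcome leaves both parties out of the hole.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

Cell = Tuple[int, int]
Action = Tuple[int, int]  # hypothetical encoding: (dx, dy) displacement of the robot


@dataclass(frozen=True)
class WorldState:
    robot: Cell
    human: Cell
    hole: Cell


def simulate(state: WorldState, action: Action, horizon: int = 2) -> WorldState:
    """Toy internal model: the robot moves once, then the human walks east
    one cell per tick unless the robot occupies the cell ahead."""
    robot = (state.robot[0] + action[0], state.robot[1] + action[1])
    human = state.human
    for _ in range(horizon):
        if human == state.hole:
            break  # the human has already fallen in
        ahead = (human[0] + 1, human[1])
        if ahead != robot:
            human = ahead
    return WorldState(robot, human, state.hole)


def is_safe(outcome: WorldState) -> bool:
    """Toy evaluator: an outcome is unsafe if anyone ends up in the hole."""
    return outcome.hole not in (outcome.robot, outcome.human)


def consequence_engine(state: WorldState, candidates: List[Action]) -> List[Action]:
    """Winnow the candidate list to actions whose simulated outcome is safe.
    It does not pick the action; that is left to the main controller."""
    return [a for a in candidates if is_safe(simulate(state, a))]


def main_controller(state: WorldState, candidates: List[Action]) -> Optional[Action]:
    """Select the first acceptable action in the controller's own preference order."""
    safe = consequence_engine(state, candidates)
    return safe[0] if safe else None


start = WorldState(robot=(1, 1), human=(0, 0), hole=(2, 0))
candidates = [(0, 0), (1, 0), (-1, 0), (0, 1), (0, -1)]  # stay, east, west, north, south
safe_actions = consequence_engine(start, candidates)
chosen = main_controller(start, candidates)
print(safe_actions, chosen)  # [(0, -1)] (0, -1)
```

In this toy run only the move that places the robot in the human's path survives the filter. Keeping `consequence_engine` separate from `main_controller` reflects the division of labour in the text: the engine vetoes, the controller decides.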

So far, the types of harm that their robots can simulate and avoid are simple physical accidents. Specifically, they have run experiments using two different generations of robots preventing a human (a role played in their tests by a second robot standing in for the human) from falling into a hole. Winfield admits in various talks that this is an extremely constrained environment, but he asserts that the principles involved can be expanded to include various sorts of ethical harm (see his talk "Ethical robotics: Some technical and ethical challenges" or the slides from it):

- Unintended physical harm

- Unintended psychological harm

- Unintended socio-economic harm

As he notes, the big difficulty is that each of these is hard to predict in simulations. In order to make the system more sophisticated, one must add both more potential actions and more detail to the simulations, and the computation required grows exponentially as a result. Even with the benefit of Moore's Law, the limits of practicality are hit very quickly. Nonetheless, the Bristol team has demonstrated substantial early success.
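A rough back-of-the-envelope calculation shows why faster hardware helps so little here. The sketch below assumes, purely for illustration, that the engine exhaustively simulates every sequence of actions over a fixed look-ahead; the source does not say how the Bristol system searches, and the function names and figures are hypothetical.

```python
import math


def sequences_to_simulate(actions_per_step: int, lookahead_steps: int) -> int:
    """Number of distinct action sequences under exhaustive look-ahead (assumed model)."""
    return actions_per_step ** lookahead_steps


def extra_lookahead_from_speedup(speedup: float, actions_per_step: int) -> float:
    """Additional look-ahead steps affordable if the hardware gets `speedup` times faster."""
    return math.log(speedup) / math.log(actions_per_step)


# With 10 candidate actions per step, each extra step of look-ahead
# multiplies the number of simulations by 10.
for depth in (1, 2, 4, 8):
    print(depth, sequences_to_simulate(10, depth))

# Doubling the available compute buys well under one extra step of look-ahead.
print(round(extra_lookahead_from_speedup(2.0, 10), 2))
```

Under these assumptions, a doubling of processing power extends the look-ahead by only about a third of a step, which is why the practical limits arrive so quickly.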

Like Bringsjord and, to an extent, the Andersons, both of whom we covered in the previous installment, Winfield comes from a traditional logicist background, and not the Model Free, Deep Learning school of Monica Anderson and many others. This means that it is engineers, programmers and architects who are responsible for the internal models, the Evaluator and the Controller logic. This only adds to the scalability issues.

Still, it does provide for one criterion that all of these researchers agree upon. Not only must a system behave in accordance with ethical principles or reasoning, but it must also be able to explain why it acted as it did, and what principles and reasoning were involved. Bringsjord is concerned with developing safe military and combat robots, the Andersons work in medical and healthcare applications, and Winfield started with simply making robots safe. Researchers in each of these areas, and in fact any academic involved in research, must be concerned with oversight from formal ethical review boards. If the principles, models, and reasoning are designed and implemented by human engineers, then building in an audit facility is easy. True Machine Learning approaches, such as Artificial Neural Nets (ANNs) and Deep Learning, may well involve the system itself creating the algorithms, models and logic. This makes it substantially harder to ensure that a plain language explication of the reason for a certain action will be readily available.

Winfield differs from Bringsjord, however, in being less devoted to the top-down logicist approach. As can be seen, especially in the "related topics" list of papers, the Bristol team is involved in genetic algorithms for software and hardware, robot culture, the role of forgetting and error, self-healing and other low-level biology-inspired methods. While he rejects deontology and virtue ethics, he does so on purely pragmatic grounds, and not in accordance with a manifesto. He writes, "So from a practical point of view it seems that we can build a robot with consequentialist ethics, whereas it is much harder to think about how to build a robot with say Deontic ethics, or Virtue ethics." If, as the consequentialist complexities explode exponentially (or worse), Deep Learning or other bio-inspired approaches prove effective in deontological or virtue contexts, then, so long as there is a way to explicate the system's reasoning, in keeping with the needs of ethics review and auditing, one can envision Winfield and his colleagues embracing what works.

In terms of the specific goals of this web site, making Personified Systems that are worthy of trust, it is pretty clear that Winfield and the team in Bristol have demonstrated the core of a Consequentialist system. Whether it can grow with the technology as the Machine Learning and Deep Learning explosions progress is less clear, but as with Bringsjord's DCEC system, Winfield's Consequence Engine certainly provides usable technology that we would be wise not to ignore.